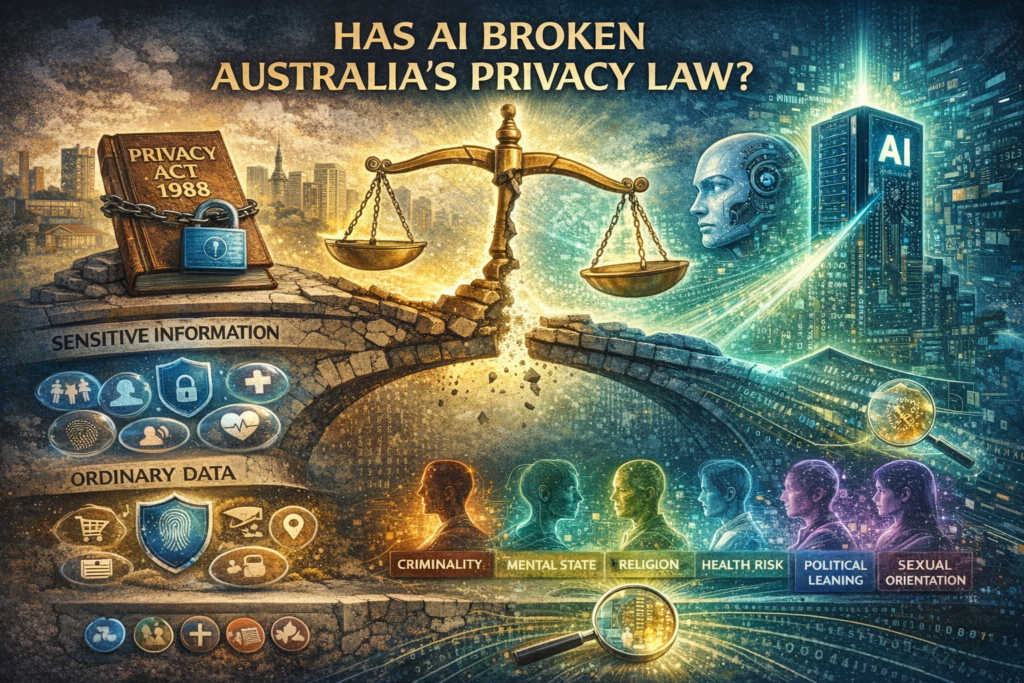

Australia’s Privacy Act 1988 (Commonwealth) was built for a simpler age. It has grown since then, but has it matured? It protects certain categories of “sensitive information” (race, religion, political opinions, health data) more strictly than ordinary data like shopping habits or CCTV footage. The assumption is tidy and intuitive: some data is inherently sensitive; other data is not.

Artificial intelligence can demolish that distinction. Modern AI systems do not merely collect information; they infer. From facial expressions, voice tone, browsing patterns or location trails, algorithms can predict mental health risks, sexual orientation, political leanings, religious feelings, and vulnerability to manipulation. This is emotional AI at work, deriving mental outlooks and psychological states from micro-expressions. Predictive analytics can label someone as a risk based on behavioural patterns. The privacy harm no longer lies in what is collected, but in what is concluded.

The recent facial recognition case involving Bunnings (a superstore chain), heard before the Administrative Review Tribunal (ART), reveals this kind of fault line. Between 2018 and 2021, the retailer deployed facial recognition technology in dozens of stores to deter theft and violence. In 2024, the Office of the Australian Information Commissioner (OAIC) found the company had breached privacy law by collecting sensitive biometric data without consent and failing to meet its transparency obligations. But in February 2026, the ART partly overturned that ruling, finding the system could operate without consent where necessary to address serious crime risks.

Even when images are not permanently stored (as Bunnings argued), real-time scanning can generate identity matches and risk classifications. The intrusion is not limited to the capture of a face; it lies in the algorithmic judgement attached to it. A person becomes a profile, a prediction, a probability.

This exposes the deeper weakness of the list-based framework in Australia’s privacy legislation. Sensitivity today is not inherent in the data; it emerges from processing and context. Ordinary data, when fed into powerful inference engines, can produce extraordinary and deeply personal conclusions, with consequences ranging from exclusion to discrimination. Seen this way, the law’s commitment to technological neutrality looks more like technological blindness.

The argument now is that reform must shift from protecting predefined data categories to regulating harmful uses of inference. If those who develop AI systems, or those who commission them, can reasonably foresee discrimination, psychological profiling, or manipulative targeting, the law should put protective barriers in place regardless of whether the input data is labelled sensitive or ordinary. The OAIC’s guidance recognising that inferred information can itself be “personal information” is a start. But guidance cannot substitute for statutory clarity.

Without modernisation, the Privacy Act won’t keep up with an era where inference, not input, defines power. Privacy protection must evolve from guarding data types to guarding people from algorithmic consequences. Only then will the law match the technology it seeks to restrain.

Case access:

Bunnings Group Limited and Privacy Commissioner (Guidance and Appeals Panel) [2026] ARTA 130 (4 February 2026) https://www.austlii.edu.au/cgi-bin/viewdoc/au/cases/cth/ARTA/2026/130.html

https://substack.com/@macropsychic/note/c-213481547