Human Freedom in the Age of Predictive Technologies

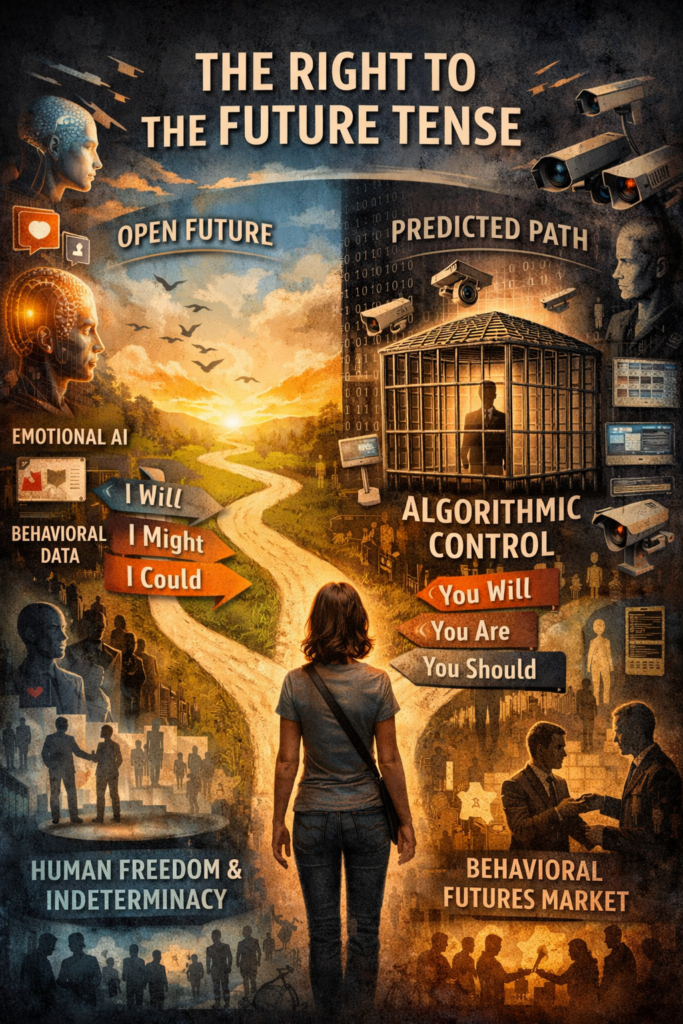

The idea of a right to the future tense emerged in the late 2010s alongside growing concern about data-driven prediction and behavioural control. It has been most notably articulated by Shoshana Zuboff in The Age of Surveillance Capitalism (2019). Zuboff introduced the concept to describe how large-scale digital surveillance and predictive analytics threaten a core condition of human freedom: the ability to live into an open, self-authored future.

While not framed initially as a formal legal right, the idea arose from critical analysis of how digital platforms convert human experience into behavioural data and sell predictive insights into ‘behavioural futures markets’, enabling subtle forms of pre-emption and control. The concept quickly resonated beyond sociology, gaining traction in legal, constitutional and AI governance debates as algorithmic profiling, predictive policing, automated welfare systems and emotional AI became more prominent. Emotional AI refers to technologies, including social media, that can detect, classify and respond to users’ emotional lives. This often involves artificial intelligence systems that analyse signals of human states, such as facial expressions, voice patterns, physiological data and various behavioural cues.

Pseudo-real conditions versus actual real conditions

What this really means is that individuals are increasingly being acted upon (even in virtual environments when interacting on digital platforms), and decisions are being made about them, based on forecasts rather than real choices. It is in these kinds of contexts that the right to the future tense has been promoted as a normative response to predictive technologies. It is an attempt to articulate, in rights-based terms, the need to preserve human indeterminacy, autonomy and the freedom to become something other than what data-driven systems predict.

Indeed, the right to the future tense represents a profound and increasingly urgent proposed extension of human rights in the digital age. The concept captures a foundational aspect of human freedom: the ability to inhabit an open, undetermined future, that is, to imagine, choose, change and shape one’s life trajectory without those possibilities being systematically pre-empted or constrained by powerful external systems. As artificial intelligence and data-driven responses become pervasive, one’s elemental claim on the future is under growing threat.

The metaphor of the ‘future tense’ highlights this problem by drawing force from language itself. In grammar, the future tense expresses possibility, intention and becoming: I will, I might, I could. Does this not reflect a real truth, that human life depends on indeterminacy? Compare the untruth asserted by algorithmic control, prediction and emotional AI: you will, you are, you should.

Certainly, Zuboff suggests that AI-driven systems undermine this real condition of human life by converting lived experience into behavioural data. What is the problem with that? The problem is that the data are then transformed into predictive products sold into behavioural futures markets, where corporations, governments and other actors (including transnational criminal networks) purchase probabilistic knowledge of what individuals are likely to do, feel or desire. Then comes the real problem: a type of pseudo-culture! The result is not merely observation but intervention that nudges, shapes and steers behaviour in ways that serve the interests of corporations, authoritarian governments and even criminals, rather than aiding an individual’s self-determination or self-actualisation. What is eroded in this process is the freedom to decide one’s future and to design the path toward it.

Need for protection against the pseudo

A right to the future tense seeks to protect individuals against the technologies, institutions and actors mentioned, all of which seek to fix people into predetermined categories. It is both a reaction and a response to algorithmic profiling that generates persistent digital representations. These include risk scores, reputational rankings and behavioural predictions, as well as digital records that can effectively remain somewhere on the internet for the whole of one’s life, as if a past moment were permanent and unchanging, contrary to a ‘right to be forgotten’.

The outcome is that these representations increasingly govern or determine access to opportunities. Rather than responding to what a person has actually done (or has real human potential to do based on actual capabilities), AI systems act on inferences about what the person is predicted to do or become, as if those inferences were almost absolute truth. This shift from actuality to prediction narrows the horizon of possibility, producing futures that are effectively written in advance through automated, self-reinforcing interventions like echo chambers.

These dynamics are evident across multiple domains. They include AI-driven profiling in employment; predictive policing that targets individuals or groups before any wrongdoing occurs; and automated credit and welfare systems that deny resources based on historical correlations. Generally speaking, none of these practices is illegal per se, but each can become illegal depending on how it is designed, deployed and governed, particularly under anti-discrimination law, human rights law and privacy law, and through challenges based on administrative law (e.g. bias, procedural fairness, taking into account irrelevant considerations, and unreasonableness). As already mentioned, we can go further and refer to emotional AI that infers inner states to manipulate choices in real time, as well as the permanence of digital records that create enduring shadow selves.

Across all these contexts, prediction increasingly displaces agency, relocating power from individuals to computational systems. This shift constrains individual autonomy by shaping decisions before conscious reflection can occur, reducing the space for self-directed choice. At the same time, it undermines authenticity, as individuals are nudged to conform to algorithmically inferred identities rather than freely expressing and revising who they are over time.

A right to the future tense, even though expressed as a metaphor, would safeguard several interconnected dimensions of human flourishing (eudaimonia or the good life) that require autonomy and authenticity of self. These dimensions include personal development and self-determination; the freedom to revise beliefs, identities and life plans; protection against permanent digital judgement; and cognitive and emotional autonomy. These protections are especially critical for children and marginalised groups, whose futures are most vulnerable to premature classification.

Implicit recognition of the right to the future tense

While not yet recognised as a standalone legal right, the concept of a right to the future tense resonates strongly with established constitutional and human rights principles, including dignity, autonomy, freedom of thought and privacy. Contemporary regulatory frameworks, such as the EU’s risk-based AI governance, now also reflect these concerns implicitly. The EU AI Act, for example, restricts or prohibits AI systems that pose unacceptable risks to fundamental rights, including certain forms of biometric categorisation, social scoring and emotion recognition in sensitive contexts such as education and employment. These restrictions implicitly acknowledge the danger of predictive and affective technologies that pre-empt individual behaviour or fix people into algorithmic identities.

The EU’s General Data Protection Regulation (GDPR) also provides safeguards, such as purpose limitation, data minimisation, the right to rectification, and the right to erasure. Each, in some way, protects individuals against permanent or determinative data profiles that could foreclose future opportunities. Reference should also be made, and recourse may even be required, to certain values that are explicitly protected under the Charter of Fundamental Rights of the European Union (2000/C 364/01), notably Article 1 (Human dignity), Article 7 (Respect for private and family life), Article 8 (Protection of personal data), and Article 11 (Freedom of expression and information). Each of these provisions guards against forms of external control or intrusion that would unduly constrain an individual’s capacity to shape their own life course.

A necessary conclusion

Ultimately, asserting a right to the future tense affirms that people must be allowed to become, not merely be predicted or managed. As predictive technologies grow more powerful, preserving indeterminacy is essential to ensuring that human dignity, agency and freedom endure in an age increasingly defined by computation. Without such protection, an individual’s future risks being reduced from a realm of wide possibilities into a domain of algorithmic control.

https://open.substack.com/pub/macropsychic/p/the-right-to-the-future-tense