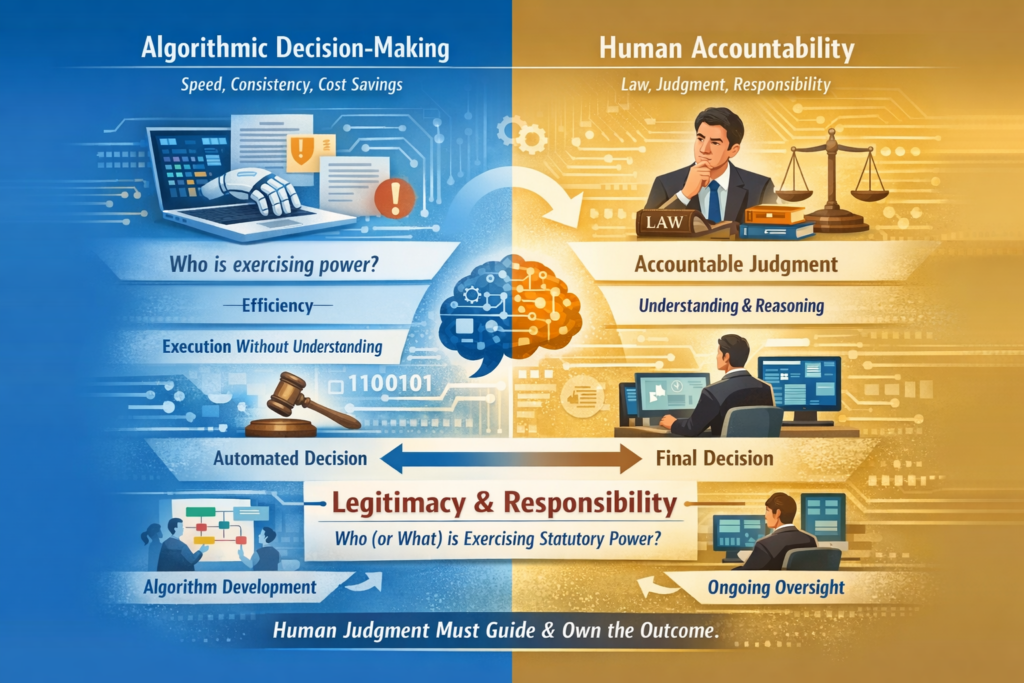

Public sector automation is often framed as an efficiency reform. Governments promote algorithms that process welfare claims, assess risks, and issue infringement notices, citing speed, consistency and cost savings. But efficiency is not the legal test. Public law asks a deeper question: who, or what, is exercising statutory power? Legitimacy depends not on computational accuracy but on accountable judgement.

Administrative law has long presumed a human cognitive model of decision-making. Statutory powers are entrusted to officials capable of interpreting legislation, weighing relevant considerations, and forming a genuine ‘state of satisfaction’. This is not a metaphor but a legal requirement: the decision-maker must actively engage with the law and the facts.

Deterministic algorithms apply fixed rules, and machine-learning systems generate statistical predictions, but neither understands the law it applies. Without human cognition, there is no interpretation, only execution. Human cognition can, however, enter before the algorithm is deployed: in the development of a system that will eventually, or soon, effectively make the decision. This differs from the usual case, in which human cognition is exercised at the moment the actual decision is made.

The human cognition principle also underpins the duty to give reasons for a decision. Lawful decision-making requires intelligible justification. Reasons demonstrate that statutory power was exercised through legal reasoning, not mechanical output. Statutory power requires a conscious mental process. A computer system cannot form intention or assume responsibility. Its outputs acquire legal meaning when adopted by a human officer accountable for the decision. Here too, however, a human officer can certainly be held accountable for the reasons an algorithmic decision-making system was deployed in the first place. That is, human accountability in automated decision-making can arise before any automated decision is made.

This means modern administrative law does not, and practically cannot, reject automation outright. Instead, it can recognise that human cognition enters earlier in the process: in the design, training and implementation of algorithmic systems. In this process, humans select variables, define objectives, interpret statutory criteria, and determine how outputs are used. These upstream choices embed human judgement into automated systems. But public law still requires some ongoing human engagement, such as a manager of the system, to ensure that outputs are monitored, evaluated and justified in light of the particular circumstances.

The lesson is not that algorithms have no place in government or in administrative decision-making, but that they cannot replace human responsibility. Automation can assist decision-makers by organising information and improving consistency. But public power must remain traceable to a human mind, or an agency composed of human minds, capable of explanation and accountability. The rule of law demands more than technically correct outputs. It requires decisions grounded in legal reasoning and owned by accountable officials.

If government becomes a system where decisions emerge solely from automated processes, responsibility dissolves into code. Public law insists on something different: that the exercise of power remains, ultimately, a human act. Without a human mind, or minds, capable of understanding, explaining and owning the decision, authority loses the very accountability that gives it legal legitimacy.

https://substack.com/profile/152321377-perspective-undercurrents-pu/note/c-216657410